Discover the practical aspects of implementing deep learning solutions in Python, bridging the gap between theory and industry practice with readily available resources such as PDFs.

What is Deep Learning?

Deep learning, a subset of machine learning, uses artificial neural networks with multiple layers (hence “deep”) to analyze data at increasing levels of abstraction. These networks are loosely inspired by the structure and function of the human brain, which lets them learn complex patterns. Resources like “Deep Learning with Python” by François Chollet, available as a PDF, provide a comprehensive understanding of these concepts.

Unlike traditional machine learning, which typically requires manual feature extraction, deep learning algorithms discover relevant features from raw data automatically. This capability is particularly powerful for unstructured data such as images, text, and audio. Frameworks like TensorFlow and Keras, coupled with accessible learning materials in PDF format, put this technology within reach of developers who want to build sophisticated models.

Why Python for Deep Learning?

Python’s prominence in deep learning stems from its simplicity, readability, and extensive ecosystem of libraries. Frameworks like TensorFlow and Keras are Python-based, offering intuitive APIs for building and training neural networks. The availability of resources, including the popular “Deep Learning with Python” book in PDF format, further solidifies Python’s position.

Python also has a vibrant community that provides ample support and pre-built tools for data manipulation, visualization, and scientific computing. Jupyter Notebooks, commonly used for deep learning projects, integrate directly with Python and make experimentation and collaboration easier. The wealth of online courses and tutorials, often accompanied by downloadable PDFs, makes Python a strong choice for both beginners and experienced practitioners who want to get into deep learning.

Setting Up Your Environment

Begin by installing Python and essential libraries; explore cloud or local environments, and familiarize yourself with TensorFlow and Keras, often detailed in PDF guides.

Installing Python and Essential Libraries

Deep learning with Python starts with a properly configured environment. Begin with a recent Python 3 installation and make sure it is added to your system’s PATH. Version 3.7 was long quoted as the minimum, but it has reached end of life and current TensorFlow releases require a newer interpreter, so check the framework’s installation guide for the supported range. You will also need core libraries such as NumPy for numerical computation and Pandas for data manipulation.

For deep learning specifically, TensorFlow and Keras are the key packages. Create a virtual environment first (for example with venv) to isolate project dependencies, then install with pip, Python’s package installer, using commands like pip install tensorflow and pip install keras. Recent TensorFlow releases also pull in Keras as a dependency, so the second command is often unnecessary. Many resources, including comprehensive PDF guides, detail these steps.

Matplotlib and Seaborn are also valuable for data visualization and help in understanding model performance. Documentation and tutorials, often available as downloadable PDFs, walk through each installation step.
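Once the packages are installed, a quick check can confirm that the environment sees them. The following is a minimal sketch using only the standard library; the function name check_environment and the package list are assumptions chosen to match the libraries named above, and the check only tests whether each package can be found, not whether its version is compatible.

```python
import importlib.util
import sys

# Import names of the libraries mentioned in this section (an assumed list).
REQUIRED = ["numpy", "pandas", "tensorflow", "keras", "matplotlib", "seaborn"]


def check_environment(packages=REQUIRED):
    """Return {package: True/False} for whether each package can be located."""
    return {name: importlib.util.find_spec(name) is not None for name in packages}


if __name__ == "__main__":
    print("Python", sys.version.split()[0])
    for name, found in check_environment().items():
        print(f"{name:<12} {'ok' if found else 'missing'}")
```

Run it inside the activated virtual environment; anything reported as missing can then be installed with pip.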

Popular Deep Learning Frameworks: TensorFlow and Keras

TensorFlow, developed by Google, is a cornerstone of deep learning, offering robust tools for numerical computation and large-scale machine learning. Its flexibility allows for complex model building, though it can present a steeper learning curve. Keras is a high-level API that runs on top of TensorFlow (or, in Keras 3, other backends such as JAX and PyTorch) and simplifies model creation and experimentation.

Many resources, including detailed PDFs, showcase Keras’ user-friendliness, making it ideal for rapid prototyping. TensorFlow provides greater control for advanced users, while Keras prioritizes ease of use. Both frameworks benefit from extensive documentation and community support.

Exploring downloadable PDFs and online tutorials focused on TensorFlow and Keras will accelerate your understanding. These resources often include practical examples, enabling you to build and train models efficiently within the Python ecosystem.

Choosing Between Cloud and Local Environments

The choice between cloud and local environments for deep learning depends on resource availability and project scale. Local development on your own laptop suits smaller datasets and initial experimentation, and downloadable PDFs often detail the setup procedure. Deep learning is computationally demanding, however, and training larger models is often impractical without a GPU.

Cloud platforms like AWS, Google Cloud, and Azure offer on-demand access to powerful hardware, removing the need for expensive local infrastructure. They also provide pre-configured environments that streamline setup, and PDFs often guide cloud environment configuration.

Consider data privacy and cost as well. Cloud solutions incur ongoing expenses and move your data off-premises, while local setups require an upfront hardware investment. Many online resources and PDFs compare these options, helping you choose the best fit for your deep learning projects in Python.

Traditional Machine Learning vs. Deep Learning

Traditional methods like regression and random forests contrast with deep learning’s neural networks; PDFs detail both, showcasing deep learning’s advantages for complex tasks.

Overview of Traditional Machine Learning Methods

Traditional machine learning encompasses a range of algorithms that learn patterns from data rather than following explicitly programmed rules. Linear regression fits a relationship between variables, while decision trees build branching rules for classification. Random forests, an ensemble technique, combine many decision trees for improved accuracy and robustness. Gradient-boosted trees build models sequentially, each one correcting the errors of those before it.

These methods often require careful feature engineering, the process of selecting and transforming relevant data attributes. They typically perform well on structured data with clear features, but can struggle with high-dimensional or complex datasets. PDFs covering these techniques often highlight their limitations relative to the automated feature extraction of deep learning, particularly for unstructured data like images or text. Understanding these foundational methods helps in appreciating what deep learning adds.
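To make the idea of fitting a relationship on a hand-chosen feature concrete, here is a minimal sketch of simple linear regression using the closed-form least-squares solution in plain Python. The function name fit_line and the area and price numbers are invented for illustration; in practice a library such as scikit-learn would normally be used.

```python
def fit_line(xs, ys):
    """Ordinary least squares for y = slope * x + intercept (one feature)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov_xy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    slope = cov_xy / var_x
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Hand-engineered feature: floor area (made-up numbers, price = 3 * area).
area = [50.0, 60.0, 80.0, 100.0]
price = [150.0, 180.0, 240.0, 300.0]

slope, intercept = fit_line(area, price)
predicted = slope * 70.0 + intercept  # prediction for an unseen area
```

The model is only as good as the feature it is given, which is the feature-engineering burden described above.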

The Advantages of Deep Learning

Deep learning excels where traditional methods falter, particularly with unstructured data. Its key advantage is automated feature extraction, which reduces the need for manual feature engineering. Convolutional Neural Networks (CNNs) learn hierarchical features from images, while Recurrent Neural Networks (RNNs) process sequential data like text by carrying information from one step to the next.

Deep learning models, often detailed in resources like “Deep Learning with Python” PDFs, can achieve state-of-the-art performance on complex tasks. They scale well with large datasets, and accuracy often improves as data volume grows. They do require significant computational resources, but cloud environments make that hardware accessible. The ability to model intricate relationships and learn representations directly from raw data makes deep learning a powerful tool across diverse fields.

Deep Learning Models in Python

Explore standard feedforward networks, CNNs for image data, and RNNs for sequential data, all implemented in Python, with code examples often found in dedicated PDFs.

Standard Feedforward Neural Networks

Feedforward neural networks are the foundational building block of deep learning and work well on ordinary tabular data. These networks, easily implemented in Python with frameworks like TensorFlow and Keras, consist of interconnected layers of nodes. Information flows in a single direction, from input to output, with no looping connections.

Numerous resources, including comprehensive PDFs like “Deep Learning with Python” by François Chollet, detail the construction and training of these networks. Jupyter Notebooks accompanying the book provide practical code samples that show how to define layers, activation functions, and optimization algorithms. Understanding these networks matters because they are the basis for more complex architectures, and detailed documentation and practical examples in PDF format ease the learning curve.
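The single-direction flow through layers can be sketched without any framework. The following is an illustrative forward pass in plain Python, not the code from the book; the helper names (relu, dense, forward) and the hand-picked weights are assumptions made for the example. A framework such as Keras would define the same structure with its own layer objects and learn the weights during training.

```python
def relu(values):
    """ReLU activation: negative values become zero."""
    return [max(0.0, v) for v in values]


def dense(inputs, weights, biases):
    """One fully connected layer; weights[j][i] links input i to unit j."""
    return [sum(w * x for w, x in zip(row, inputs)) + b
            for row, b in zip(weights, biases)]


def forward(inputs, layers):
    """Pass inputs through each (weights, biases, activation) layer in order."""
    activations = inputs
    for weights, biases, activation in layers:
        activations = dense(activations, weights, biases)
        if activation is not None:
            activations = activation(activations)
    return activations


# Hypothetical parameters: 2 inputs -> 2 hidden units (ReLU) -> 1 linear output.
layers = [
    ([[1.0, -1.0], [0.5, 0.5]], [0.0, -1.0], relu),
    ([[2.0, 1.0]], [0.5], None),
]

output = forward([3.0, 1.0], layers)  # hidden = [2.0, 1.0], output = [5.5]
```

Training consists of adjusting the weights and biases so that outputs like this one move closer to the targets; that part is what the optimization algorithms mentioned above handle.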

Convolutional Neural Networks (CNNs) for Image Data

Convolutional Neural Networks (CNNs) excel at processing image data, using specialized layers (convolutional, pooling, and fully connected) to extract hierarchical features automatically. Python’s deep learning libraries, detailed in resources like the “Deep Learning with Python” PDF, simplify CNN implementation. These networks identify patterns like edges, textures, and shapes, which are crucial for image recognition and classification tasks.

Jupyter Notebooks accompanying the book offer practical examples that show how to build and train CNNs on image datasets. Understanding concepts like filters, stride, and padding is vital. PDFs and online courses provide step-by-step guidance, making CNNs accessible even to beginners. The power of CNNs lies in their ability to learn spatial hierarchies directly from image pixels, which is where they typically outperform traditional methods.
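Filters, stride, and padding are easier to see on a tiny example. The sketch below slides one filter over a 2D image in plain Python; the function name conv2d and the hand-written edge filter are assumptions for illustration (a real CNN learns its filter values). With input size n, padding p, kernel size k, and stride s, each output dimension is (n + 2p - k) // s + 1.

```python
def conv2d(image, kernel, stride=1, padding=0):
    """Slide a 2D kernel over a 2D image (cross-correlation, zero padding)."""
    if padding:
        width = len(image[0]) + 2 * padding
        zero_row = [0.0] * width
        image = ([zero_row[:] for _ in range(padding)]
                 + [[0.0] * padding + list(row) + [0.0] * padding for row in image]
                 + [zero_row[:] for _ in range(padding)])
    k_h, k_w = len(kernel), len(kernel[0])
    out_h = (len(image) - k_h) // stride + 1
    out_w = (len(image[0]) - k_w) // stride + 1
    output = []
    for i in range(out_h):
        row = []
        for j in range(out_w):
            top, left = i * stride, j * stride
            row.append(sum(image[top + a][left + b] * kernel[a][b]
                           for a in range(k_h) for b in range(k_w)))
        output.append(row)
    return output


# 4x4 image with a vertical edge between columns 1 and 2.
image = [[0, 0, 1, 1] for _ in range(4)]
# Hypothetical filter that responds where brightness increases left to right.
vertical_edge = [[-1, 1], [-1, 1]]

feature_map = conv2d(image, vertical_edge)           # 3x3, peak at the edge
strided = conv2d(image, vertical_edge, stride=2)     # 2x2, coarser sampling
padded = conv2d(image, vertical_edge, padding=1)     # 5x5, borders included
```

The feature map is largest exactly where the edge sits, which is the sense in which a filter detects a pattern. Note that with stride 2 the windows happen to skip the edge, showing that a larger stride trades detail for a smaller output.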

Recurrent Neural Networks (RNNs) for Sequential Data

Recurrent Neural Networks (RNNs) are designed to handle sequential data, where the order of information matters: think time series, natural language, or audio. Python’s deep learning frameworks, extensively documented in resources like the “Deep Learning with Python” PDF, provide tools to build and train RNNs effectively. Unlike feedforward networks, RNNs have a form of “memory” through recurrent connections, which lets them process each input while taking earlier elements of the sequence into account.

The book’s accompanying Jupyter Notebooks showcase RNN applications such as text generation and sentiment analysis. Understanding concepts like backpropagation through time and vanishing gradients is crucial. PDFs and online platforms offer detailed explanations and practical code examples. RNNs are well suited to tasks where context is key, since they let models pick up and predict patterns in sequential data.
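The “memory” of an RNN is just a hidden state that is fed back in at every step. The following plain-Python sketch implements a simple (Elman-style) recurrent step, h = tanh(W_x x + W_h h_prev + b); the function names and the small hand-picked weights are assumptions for illustration and are not taken from the book’s notebooks.

```python
import math


def rnn_step(x, h_prev, w_x, w_h, b):
    """One recurrent step: combine the current input with the previous state."""
    return [
        math.tanh(
            sum(w * xi for w, xi in zip(w_x[j], x))
            + sum(w * hi for w, hi in zip(w_h[j], h_prev))
            + b[j]
        )
        for j in range(len(b))
    ]


def run_rnn(sequence, w_x, w_h, b):
    """Process a sequence step by step, returning the hidden state at each step."""
    hidden = [0.0] * len(b)
    states = []
    for x in sequence:
        hidden = rnn_step(x, hidden, w_x, w_h, b)
        states.append(hidden)
    return states


# Hypothetical parameters: 1 input feature, 2 hidden units.
w_x = [[1.0], [-1.0]]
w_h = [[0.5, 0.0], [0.0, 0.5]]
b = [0.0, 0.0]

# A single pulse followed by zeros: later states are non-zero only because
# the hidden state carries the earlier input forward.
states = run_rnn([[1.0], [0.0], [0.0]], w_x, w_h, b)
```

The state shrinks at every step after the pulse, a small-scale view of why information (and gradients) from early inputs can fade over long sequences.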

Resources for Learning Deep Learning with Python

Explore “Deep Learning with Python” PDFs, interactive books, and platforms like Simplilearn and Edureka to master Python’s deep learning ecosystem effectively.

“Deep Learning with Python” by François Chollet

François Chollet’s “Deep Learning with Python” is a cornerstone resource, frequently available as a PDF for convenient study. The book guides readers through the fundamentals and advanced concepts of deep learning using the Keras framework, which Chollet himself created. The third edition (2025), co-authored with Matthew Watson, comes with a Jupyter Notebook repository that provides practical code samples for hands-on learning.

It is a practical, hands-on exploration that bridges the gap between academic theory and real-world implementation. Readers benefit from a clear, concise style that makes complex topics accessible. The book’s focus on Keras allows for rapid prototyping and experimentation, reinforcing understanding through practical application. A PDF version allows offline access and study, making it a valuable asset for aspiring deep learning practitioners.

Interactive Deep Learning Books and Courses

Numerous interactive resources complement traditional deep learning texts, often offering code examples that adapt readily to Python environments. One standout, which matches the description of Dive into Deep Learning, is an interactive book with code, math, and discussions, implemented with PyTorch, NumPy/MXNet, JAX, and TensorFlow. It has been adopted at over 500 universities across 70 countries.

While a direct PDF download might not always be available for these interactive platforms, many offer downloadable code snippets and exercises. These resources often provide a more engaging learning experience than static PDFs, allowing users to experiment and solidify their understanding. Supplementing book-based learning with these interactive tools, alongside readily available deep learning PDFs, creates a robust learning pathway.

Online Platforms: Simplilearn and Edureka

Simplilearn offers a Professional Certificate in AI and Machine Learning, providing a structured curriculum for mastering deep learning with Python. While a comprehensive PDF might not be the primary delivery method, course materials often include downloadable resources and supplementary documentation.

Edureka provides TensorFlow training focused on building and training neural networks in Python. Its courses often feature downloadable code examples and project materials that supplement any foundational deep learning PDFs you use. Both platforms take a practical, hands-on approach, guiding learners through real-world applications and providing support along the way. Combining these courses with dedicated Python deep learning PDFs gives a well-rounded education.

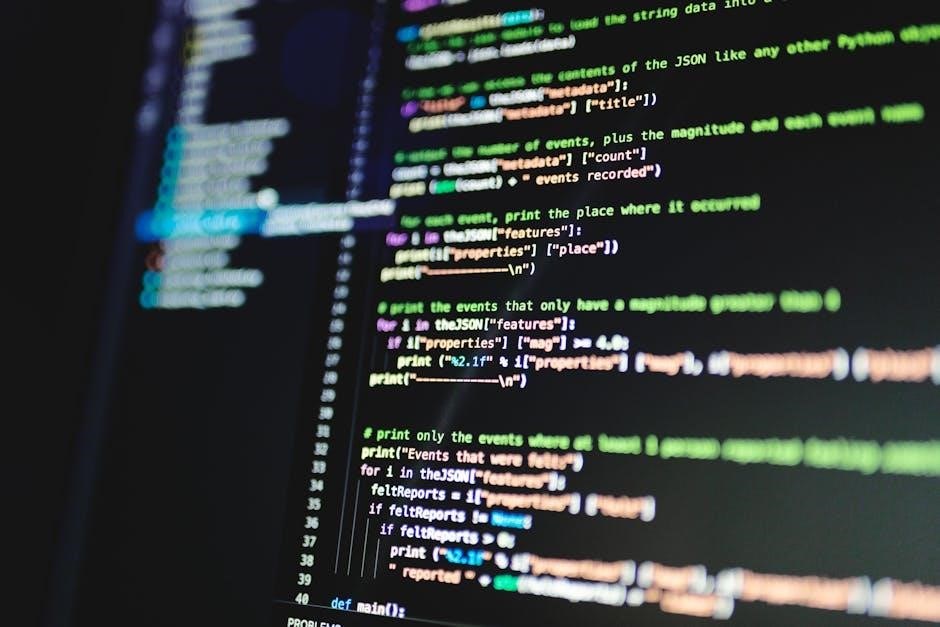

Practical Implementation and Code Examples

Explore Jupyter Notebooks implementing code from “Deep Learning with Python,” alongside a TensorFlow crash course for building and training neural networks in Python.

TensorFlow Crash Course

A focused TensorFlow crash course can rapidly improve your ability to build and train neural networks in Python. It walks through the fundamentals of TensorFlow so that you can construct both classification and regression models. Resources like “Deep Learning with Python” and its associated Jupyter Notebooks provide complementary code examples and practical implementations.

You gain hands-on experience, moving beyond theoretical concepts to work directly with the framework. This practical approach, coupled with readily available PDFs detailing deep learning libraries, reinforces your understanding and prepares you for real-world deep learning problems. The course emphasizes a direct, code-first methodology aimed at rapid skill development.
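Classification and regression models mostly differ in their output layer and loss function. As a hedged illustration of that difference (this is not material from any specific course), the plain-Python sketch below computes a softmax with cross-entropy loss for a classifier and mean squared error for a regressor; the function names and sample numbers are invented for the example.

```python
import math


def softmax(logits):
    """Turn raw class scores into probabilities that sum to 1."""
    largest = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(z - largest) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def cross_entropy(probabilities, true_class):
    """Classification loss: negative log-probability of the correct class."""
    return -math.log(probabilities[true_class])


def mean_squared_error(predictions, targets):
    """Regression loss: average squared difference from the targets."""
    return sum((p - t) ** 2 for p, t in zip(predictions, targets)) / len(targets)


# Classification: three class scores, the true class is index 0.
probs = softmax([2.0, 1.0, 0.1])
class_loss = cross_entropy(probs, true_class=0)

# Regression: continuous predictions compared with continuous targets.
reg_loss = mean_squared_error([2.5, 0.0, 2.0], [3.0, -0.5, 2.0])
```

In TensorFlow and Keras the same choice shows up as the final layer’s activation and the loss passed when compiling the model.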

Jupyter Notebooks for Deep Learning Projects

Jupyter Notebooks can streamline your deep learning projects in Python. These interactive environments are ideal for experimentation, prototyping, and documenting your workflow. A valuable resource is the repository of code samples from “Deep Learning with Python,” third edition, which offers practical implementations directly within notebooks.

Use these notebooks alongside readily available PDFs on deep learning frameworks to deepen your understanding and speed up development. Jupyter Notebooks encourage a modular approach, letting you break complex tasks into manageable steps. They also support reproducibility and collaboration, which makes them useful for any deep learning practitioner who wants an organized project workflow.

Advanced Topics in Deep Learning

Explore hyperparameter tuning and model evaluation techniques to refine your Python deep learning projects, utilizing resources found in comprehensive PDF guides.

Hyperparameter Tuning

Getting the best performance from a deep learning model requires careful hyperparameter tuning. Hyperparameters are set before training begins and significantly influence both the learning process and final model accuracy. Resources like “Deep Learning with Python” by Chollet and Watson, often available as a PDF, detail techniques such as grid search, random search, and Bayesian optimization.

Effective tuning means systematically exploring combinations of hyperparameters (learning rate, batch size, number of layers, and regularization strength) to find the configuration that gives the best results on validation data. PDF documentation frequently provides practical examples and code snippets demonstrating these methods within Python frameworks like TensorFlow and Keras. Understanding how hyperparameters interact and affect model behavior is key to successful deep learning implementation.
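Of the techniques named above, random search is the simplest to sketch. The example below samples configurations over the four hyperparameters listed and keeps the one with the lowest validation loss. Everything here is illustrative: the search ranges are assumptions, and validation_loss is a made-up stand-in for “train a model with this configuration and return its validation loss”.

```python
import math
import random


def sample_config(rng):
    """Draw one hyperparameter configuration from an assumed search space."""
    return {
        "learning_rate": 10 ** rng.uniform(-4, -1),  # log-uniform, 1e-4 to 1e-1
        "batch_size": rng.choice([16, 32, 64, 128]),
        "num_layers": rng.randint(1, 4),
        "l2_strength": 10 ** rng.uniform(-6, -2),
    }


def validation_loss(config):
    """Placeholder objective; a real one would train and evaluate a model."""
    lr_term = (math.log10(config["learning_rate"]) + 2.5) ** 2
    depth_term = 0.1 * abs(config["num_layers"] - 2)
    return lr_term + depth_term


def random_search(objective, n_trials=20, seed=0):
    """Evaluate n_trials random configurations and return the best one found."""
    rng = random.Random(seed)
    best_config, best_loss = None, float("inf")
    for _ in range(n_trials):
        config = sample_config(rng)
        loss = objective(config)
        if loss < best_loss:
            best_config, best_loss = config, loss
    return best_config, best_loss


best_config, best_loss = random_search(validation_loss, n_trials=50, seed=1)
```

Sampling the learning rate on a log scale is a common design choice because useful values span several orders of magnitude. Grid search would replace sample_config with an exhaustive loop over fixed values.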

Model Evaluation and Validation

Rigorous model evaluation and validation are essential in any deep learning project, because they check that the model generalizes to unseen data. In Python with frameworks like TensorFlow, k-fold cross-validation and hold-out validation sets are commonly used. Resources including the “Deep Learning with Python” book, often found as a PDF, emphasize choosing metrics that fit the specific problem, such as accuracy, precision, recall, F1-score, and AUC.
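As a minimal sketch of two of these ideas, the plain-Python code below builds k-fold train/validation index splits and computes precision, recall, and F1 for a binary classifier. The function names and the sample labels are invented for illustration; libraries such as scikit-learn provide tested equivalents, and real splits are usually shuffled first.

```python
def k_fold_indices(n_samples, k):
    """Split range(n_samples) into k (train_indices, validation_indices) pairs."""
    indices = list(range(n_samples))
    # Spread any remainder over the first folds so sizes differ by at most 1.
    sizes = [n_samples // k + (1 if i < n_samples % k else 0) for i in range(k)]
    folds, start = [], 0
    for size in sizes:
        validation = indices[start:start + size]
        train = indices[:start] + indices[start + size:]
        folds.append((train, validation))
        start += size
    return folds


def precision_recall_f1(y_true, y_pred, positive=1):
    """Binary precision, recall, and F1 for the given positive label."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if precision + recall else 0.0)
    return precision, recall, f1


folds = k_fold_indices(10, 3)  # validation sizes 4, 3, 3

# Made-up labels: 3 true positives, 1 false positive, 1 false negative.
y_true = [1, 0, 1, 1, 0, 0, 1, 0]
y_pred = [1, 0, 0, 1, 0, 1, 1, 0]
precision, recall, f1 = precision_recall_f1(y_true, y_pred)
```

Each sample lands in exactly one validation fold, so averaging a metric over the folds uses every example for validation once.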

PDF guides frequently include code examples for calculating these metrics and visualizing model performance. To catch overfitting, monitor the training and validation loss curves: a validation loss that rises while training loss keeps falling is the usual warning sign. Proper validation confirms that the model performs reliably in real-world scenarios and prevents deployment of poorly generalized models, which builds confidence in its predictions.
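Monitoring the loss curves can be reduced to a simple rule: stop once the validation loss has failed to improve for a set number of epochs. The sketch below applies such a patience rule to made-up loss values; the function name early_stopping_point and the numbers are assumptions for illustration. Keras offers a comparable built-in mechanism through its EarlyStopping callback.

```python
def early_stopping_point(val_losses, patience=2):
    """Return (stop_epoch, best_epoch), 0-indexed, under a patience rule.

    Training stops at the first epoch where the validation loss has not
    improved on its best value for `patience` consecutive epochs.
    """
    best_loss, best_epoch = float("inf"), -1
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss:
            best_loss, best_epoch = loss, epoch
        elif epoch - best_epoch >= patience:
            return epoch, best_epoch
    return len(val_losses) - 1, best_epoch


# Made-up curves: training loss keeps falling, validation loss turns upward.
train_loss = [0.90, 0.60, 0.45, 0.35, 0.28, 0.22, 0.18]
val_loss = [0.95, 0.70, 0.58, 0.55, 0.57, 0.61, 0.66]

stop_epoch, best_epoch = early_stopping_point(val_loss, patience=2)
# The widening gap between the two curves is the overfitting signal.
gaps = [round(v - t, 2) for t, v in zip(train_loss, val_loss)]
```

Here the best validation loss occurs at epoch 3 and training would stop at epoch 5, so the weights from epoch 3 are the ones worth keeping.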